Concerns about SSD reliability debunked (again)

Update: Empirical evidence to go with the theoretical numbers.

Summary: It checks out; SSDs last a very long time.

Background

The myths about how you should use an SSD, and what you should not do with it keep on spinning. Even if there are frequent articles which crunch the actual numbers, the superstition persists. Back in 2008, Robert Penz concluded that your 64 GB SSD could be used for swap, a journalling file system, and consumer level logging, and still last between 20 and 50 years under extreme use.

Fast forward to 2013, with 120 and 240 GB drives becoming affordable, the problem should have virtually disappeared from consumer grade hardware, but people are still worried. So when Magnus Deininger did some estimates on SSD stress testing, he got flack from Slashdot since he did not cover the consumer level disks. The write endurance and number of estimated write cycles on a single block before it goes bad varies widely between consumer and enterprise grade disks, ranging from only 1000 cycles to a million. This article from Centon explains why that is. As can be seen from the simplified figure below, the cheaper consumer drives using "TLC" (Three Layer Cell) or "MLC" (Multi Layer Cell) memory cram the data a lot closer, and thus degrade quicker than enterprise grade "SLC" (Single Layer Cell) memory.

Stress test of consumer SSD

Deininger concerned himself only with the high end drives, with 100k to 1M write cycles, while most folks over at Slashdot seems to have the low end ones, at 1k - 10k write cycles, and thus the furore. However, Deininger's estimates were also skewed against the high end drives, since he used the maximum write speed of the SATA 3 controller, which at 6 Gbit/s (750 MByte/s) is lot more than the ~500 MB/s a typical SSD is rated for, or the ~250 MB/s you probably get out of it on a consumer system. And even that is still estimates for a stress tests, and does not even start to model a typical consumer usage pattern.

Deininger goes into detail on how he came up with the estimates, and also how to plot his graphs in Gnuplot. So based on that, let's run a few numbers, covering the 10k and 1k disks and typical use. However, let's first drop the stress test write speed down from max controller speed to typical system speed of 250 MB/s. Also, for his plots, he uses multiples of 1024, which for flash memory based drives might be correct, but is not universally used; for example Intel specifies in GB (base 10), while OCZ in GiB (base 2). For transfer speed, this is wrong, as base 10 is the norm. Although it does not make a big difference, I've changed to base 10 numbers.

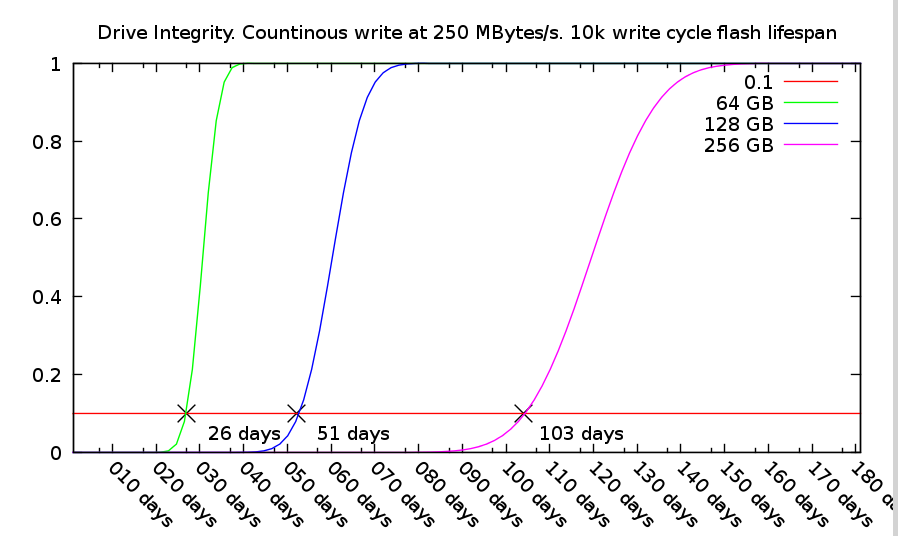

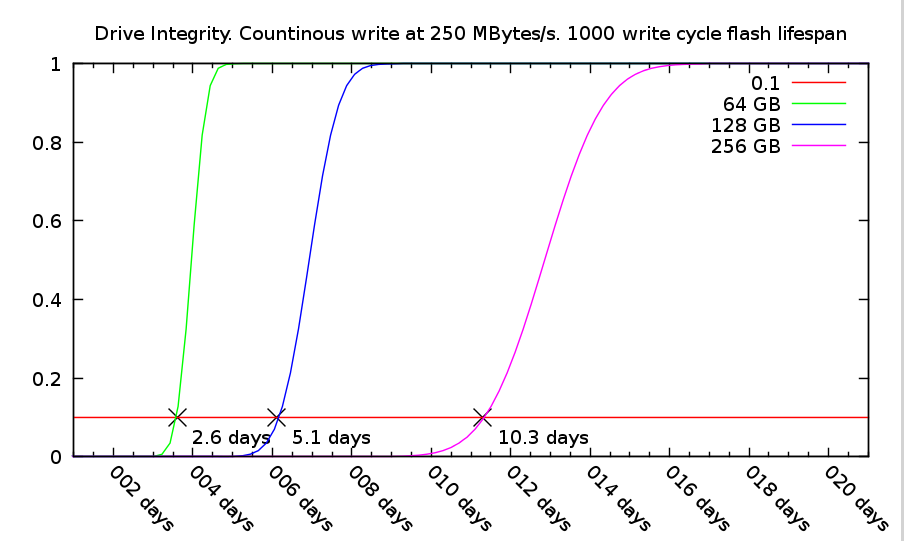

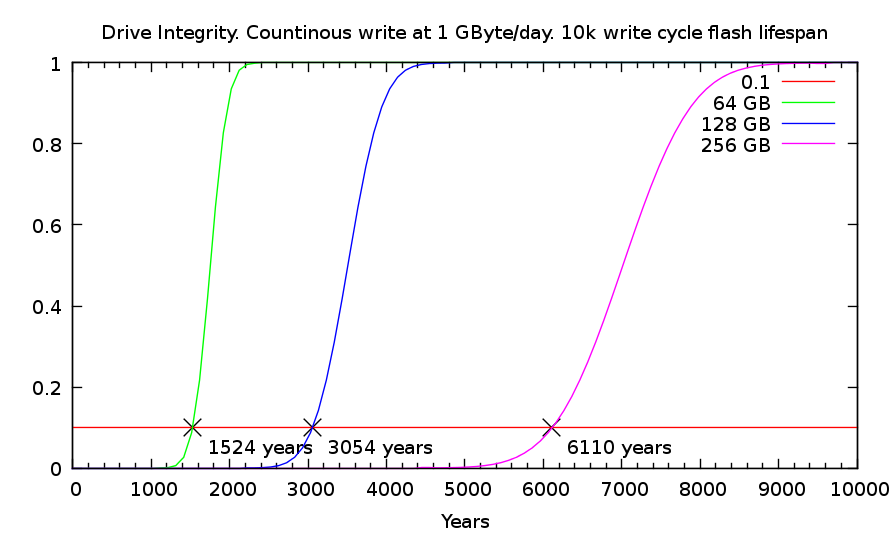

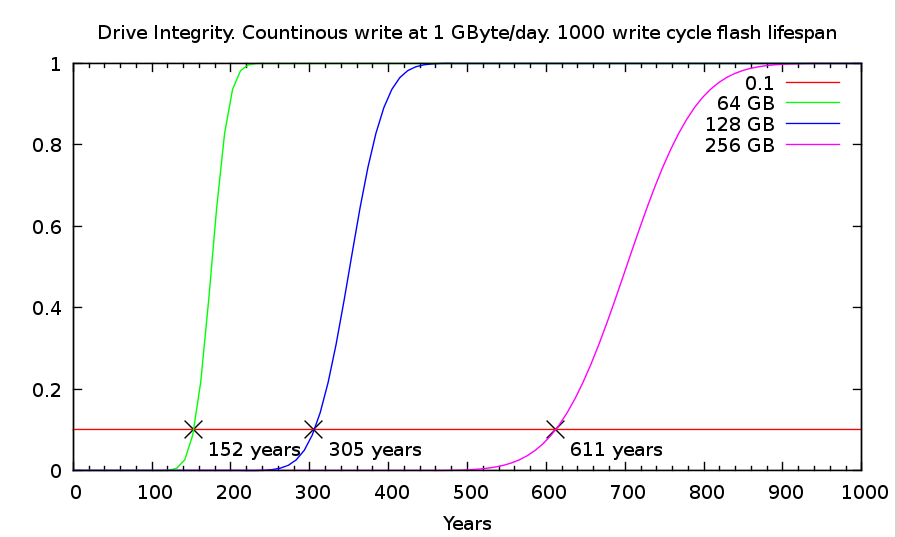

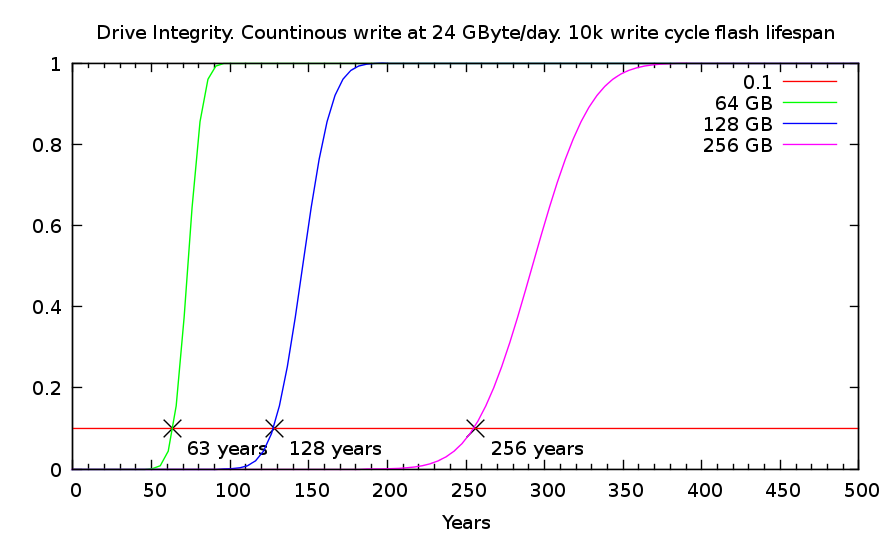

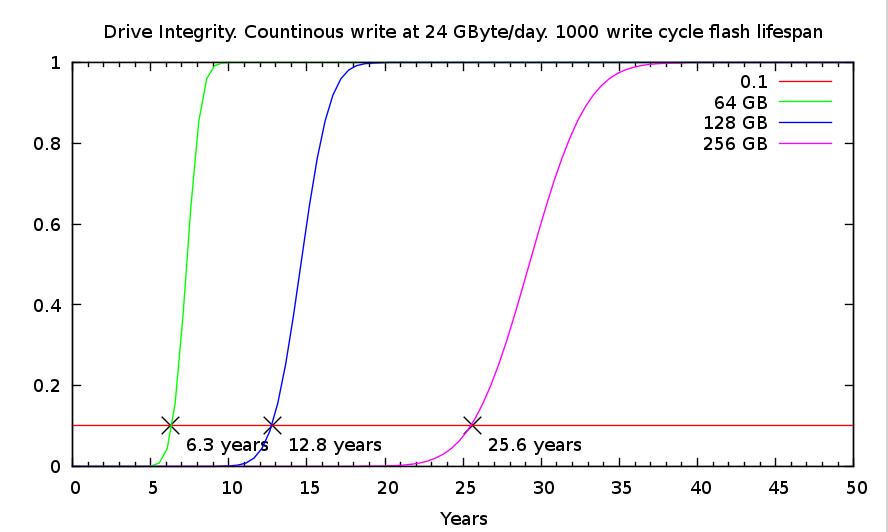

The graphs show time on the x-axis (days on the two first, and years in the next section), and the fraction of broken memory cells (or blocks) on the y-axis. That is, from 0 damaged cells, to 100% or all of them at the top. A horizontal lines marks the 10% point in all graphs since this is usually the point where damaged cells will be visible to the end user. Before that, the internal write levelling on the disk controller will hide these cells, since most disks come with about 10% space reserved for this. (Thus a disk with 128 GiB space is sold as 120 GB, and 256 GiB is sold as 240 GB).

First, there are a few fundamentals based on Deininger equations which can be seen in his examples, and also becomes clear in the graphs above: Doubling storage capacity of the drive doubles the time to failure (at the 10% line). And increasing flash lifespan by a factor of ten also increases time to failure by a factor of ten. All linear relationships, and no magic there, in other words.

For the three drive sizes considered (I dropped the 32 GB size, as I did not find it worthwhile for almost any application any more), the failure times at 10.000 write cycles are 26, 51, and 103 days for 64, 128 and 256 GB respectively. For TLC memory, at only 1000 cycles, the times are thus also a tenth; 2.6, 5.1 and 10.3 days.

If you were to conduct a stress test of drives from different manufactures, these numbers would be interesting. You could for example do the write, check and remove operations continuously till you start to see errors on the data written. However, as read speeds are typically around the same as write speeds for most SSDs, it would actually take at least twice as long as the points in these graphs. (The remove operation also has to be factored in, but is only a fraction of full read and write).

Typical usage

For any performance test it is important to understand where the critical failure points are. However, it does still not tell us what will happen on a typical home user system. A typical consumer would not fill up his whole drive multiple times a day, only to remove it all and start over. So how best to simulate typical user behaviour. Well, we could of course just leave the drive in a machine, and run user software over many years to see what happens. That would not be practical, as we'd never get any useful results in a reasonable time. So, we're left with estimates, but at a different write speed than the stress test above.

How much would a typical user write to his disk? There will be different use cases of course, but let's assume two scenarios: a low to medium use case, where 1 GB is written every day, and a heavy home user who writes 1 GB an hour, every day (although, even that is probably beyond what could be labelled as consumer usage). At this point, a table of the different speeds and units comes in handy, so we can wrap our head around the numbers. It then becomes clear how extreme the 250 MB/s stress test actually is, as it will fill up a 64 GB disk 337 times over in 24 hours (250 MB/s * (24*60*60) second = 21600 GB. And 21600 / 64 GB = 337.5 times).

| MBit/s | MByte/s | MByte/hour | GByte/day | GByte/year | |

| SATA3 max speed | 6000 | 750 | 2700000 | 64800 | 23652000 |

| Stress test | 2000 | 250 | 900000 | 21600 | 7884000 |

| Heavy use | 2.2222 | 0.2778 | 1000 | 24 | 8760 |

| Low/Medium use | 0.0926 | 0.0116 | 41.67 | 1 | 365 |

Now for some graphs. You'll have to watch them carefully as the plotted lines are all the same, the y-axis are all the same, the disk sizes are the same, and the only parameters changing are write speed; 1 GB vs. 24 GB a day, and cell cycle life span; 10k vs. 1k. And watch out for the x-axis which are now in years, instead of days above. The first graph shows 10k write cycle disks, where 1 GB is written every day. The smallest disk, at 64 GB, will then last for 1524 years!

Can that be right, you ask? There must be some a mistake in the numbers somewhere? Well, let's do a quick check to see if it matches Deininger's graphs: First, his plots were in days, so 1524 years makes 1524 * 365 = 556260 days. Next, the ratio between 6 GBit/s and 1 GByte / day we get from the table above: 64800 (GB / day). Finally, In his first graph, he considered 100k write cycle disks, so we multiply by a factor of 10. Plug in the numbers: 556260 / 64800 * 10 = 86. Exactly matching 86 days for the 64 GB disk at 100k cycles in his first graph. The math works out.

Even in the most unrealistic use case, where a 64 GB drive rated for 1000 write cycles (TLC memory) is filled up almost three times per week, it will last more than six years before the first dead memory cells are likely to show. Moving to a MLC based drive at 10k (still consumer grade), the time to failure moves to 63 years, most likely far outlasting the system it was hosted in, or maybe even the consumer who bought it.

(For the Gnuplot scripts to generate all the graphs above, please see this file).

Conclusion

So will Sold State Drives last till the end of time? Of course not! In fact, plenty of other components are prone to fail just the same way as in old HDDs: Capacitors are infamous for their short lifespan; solder joins might crack. The important point is, it is not the memory cells which are likely to fail first, even under the most extreme use.

Still, it makes sense to deploy tools fit for purpose: An enterprise drive drive using SLC memory, with 100k or 1M write cycles will leave all doubts behind. There will be no need to consider special use cases or take special precautions (beyond normal backup and security procedures which should be in place regardless of drive type). For the home user, the same is true: Even the smallest drives with shortest cell lifespan will not fail under normal use.

More specifically, there are no problems or worries with

- using ext3, ext4 or other journalling file systems on an SSD.

- storing /tmp or logs on the SSD.

- using an SSD partition for memory swap.

- any normal consumer usage pattern.

In summary: Exchanging the old spinning disk with solid state will pose no extra risk of data loss. It will of course not reduce the risk of loss from other threats either, so normal backup and security procedures should always be in place.